The purpose of this example is to demonstrate how the software to be measured can be packaged in a Docker container for easier deployment on the SUT (system under test). Docker and other container technologies greatly simplify configuring the test environment and reproducing the measurement session, for example when verifying the results.

Note that PowerGoblin can also be launched from a container, but that is a separate procedure. Here, instead of containerizing PowerGoblin, we containerize the software running on the SUT.

Original application

This program is designed to generate a significant load on a single CPU core using a single thread. As a by-product, it also consumes memory (RAM). It does not require or use network communication. Running the program is therefore expected to increase the energy consumed by processing and memory usage, which is what we want to measure.

This result is somewhat generalisable, as the program is time-boxed and takes about the same time on every execution (~2 seconds). As it is written in Python, the program should also run on any CPU architecture and operating system for which a Python 3 interpreter is available.

The example is based on the following executable code (original.py) written in Python 3:

    # Sample: Generates lists of random numbers and sorts them
    import time
    import random

    def milli_time():
        return round(time.time() * 1000)

    def gen(n):
        return [random.randint(1, 1000) for _ in range(n)]

    def sort(arr):
        if len(arr) <= 1:
            return arr
        pivot = arr[len(arr) // 2]
        left = [x for x in arr if x < pivot]
        middle = [x for x in arr if x == pivot]
        right = [x for x in arr if x > pivot]
        return sort(left) + middle + sort(right)

    def do_computation():
        start = milli_time()
        msg = time.ctime()
        print("Computing..", flush = True)
        while milli_time() < start + 2000:
            n = 100000
            nums = gen(n)
            sort(nums)
            msg2 = time.ctime()
            if msg != msg2:
                print(msg2, flush = True)
                msg = msg2
        print("Done", flush = True)

    do_computation()

Starting the program is straightforward:

    $ python original.py
    Computing..
    Thu May 01 10:58:41 2025
    Thu May 01 10:58:42 2025
    Thu May 01 10:58:43 2025
    Done
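The time-boxed loop that keeps the duration at about 2 seconds can be isolated into a small helper. The following is a minimal sketch, not part of the original script: the names `run_for` and `sort_random_numbers` are hypothetical, the budget is shortened to 0.2 seconds, and `time.monotonic()` is used instead of `time.time()` so that wall-clock adjustments cannot shorten or lengthen the loop.

```python
import random
import time


def run_for(duration_s, work):
    """Call work() repeatedly until duration_s seconds have elapsed.

    Returns the number of completed iterations.
    """
    deadline = time.monotonic() + duration_s
    iterations = 0
    while time.monotonic() < deadline:
        work()
        iterations += 1
    return iterations


def sort_random_numbers(n=1000):
    # Same kind of load as original.py, using the built-in sort for brevity.
    return sorted(random.randint(1, 1000) for _ in range(n))


started = time.monotonic()
count = run_for(0.2, sort_random_numbers)
elapsed = time.monotonic() - started
print(f"{count} iterations in {elapsed:.2f} s")
```

The number of iterations depends on the hardware, while the elapsed time stays close to the requested budget. This is the property that makes the runs comparable.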

Work plan

Modifying the program to be measurable and adapting it to run in a Docker container consists of the following steps:

- Creating a measurement report template
  - The template is optional; its main purpose is to make the energy report more informative.
- Adding PowerGoblin HTTP API calls around the original script
  - We need to specify how a session / measurement / run is defined in terms of executable code, and to coordinate when the session / measurements / runs start and stop.
- Configuring the data collection with collectd in the SUT
  - This is optional software-based meter data (by default: RAM, CPU & network usage) collected from the SUT.
- Starting and stopping collectd before and after measurement
  - collectd needs to run in the SUT and will not be managed by PowerGoblin.
- Uploading collectd & timestamp data to PowerGoblin via its HTTP API
  - The logs created by collectd are archived and sent to the PowerGoblin instance once the runs have finished.
- Configuring a Dockerfile for the program
  - The Dockerfile defines how the Docker image is built. Using Docker images makes the measurement process more straightforward and reproducible on other systems when verifying the results.
- Building the Docker image
  - Docker images can be created manually or in a CI/CD pipeline.
- Performing the measurement
  - The measurement process is initiated and coordinated by the modified script executing in a Docker container. Before executing the script, a PowerGoblin instance also needs to be active for logging the data.

Measurement process

In this section, we study the structure of the measurement process. This is a typical measurement performed by an automated testing script. It has the following important properties, which make it suitable as a basis for scientific research:

- Fully documented process

- Reproducible procedure

- Open data

- Generated results usable for quantitative research

With Docker, the whole measurement session can be fully automated. Our example defines a Docker container that will automatically initiate the measurement process. The system can be configured to start the container on start-up or manually at any given time.

High level description

The idea is to define a session that consists of a single measurement that is run 4 times:

- Session (name: Docker example)
  - Measurement (name: RandomNumbers)
    - Run 1 (id: 1)
    - Run 2 (id: 2)
    - Run 3 (id: 3)
    - Run 4 (id: 4)
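The session hierarchy described above can be written down as a small data model. This is only an illustrative sketch: the classes `Session`, `Measurement`, and `Run` are hypothetical and are not part of the PowerGoblin client library.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    id: int


@dataclass
class Measurement:
    name: str
    runs: list = field(default_factory=list)


@dataclass
class Session:
    name: str
    measurements: list = field(default_factory=list)


# One session, one measurement, four runs with ids 1..4.
session = Session(
    name="Docker example",
    measurements=[
        Measurement(name="RandomNumbers", runs=[Run(id=i) for i in range(1, 5)])
    ],
)

for measurement in session.measurements:
    print(measurement.name, [run.id for run in measurement.runs])
```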

At a high level, we identify the following units required for this measurement: the PC (runs the PowerGoblin instance) and the SUT (executes the algorithms and measurement scripts). The measurement session should be fully deterministic. We perform the following tasks in sequence:

| Unit | Task |
|---|---|
| All | Set up the hardware and software |
| PC | Launch PowerGoblin |
| SUT | Launch the Docker container |
| - | Wait for the measurement to finish |
| PC | Analyze the logs & plots |

Creating a measurement report template

PowerGoblin supports the generation of "official" energy reports. Such reports contain metadata such as the name, author, and description of the measurement. We can define the template using Markdown (file README.md, which the measurement script uploads later):

    ---
    name: Docker example
    author: Captain Planet
    ---
    The purpose of this example is to demonstrate how to move the execution
    of the algorithm being measured to a Docker container.

    Preparing a measurement requires defining the algorithm execution as
    a measurement that can be repeated by making several runs to improve
    its statistical significance.

    In addition, the data collection for the measurement should be
    coordinated with the execution of PowerGoblin.
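Before uploading the template, it can be useful to check locally that the metadata block is well-formed. The following is a minimal sketch under the assumption that the block is delimited by two `---` lines and contains simple `key: value` pairs, as in the template above. The helper `split_front_matter` is hypothetical and does not reflect how PowerGoblin itself parses the file.

```python
def split_front_matter(text):
    """Split a template into (metadata dict, body string).

    Assumes the metadata block is delimited by two '---' lines and holds
    simple 'key: value' pairs. Returns ({}, text) if no block is found.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    end = None
    for index, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            end = index
            break
    if end is None:
        return {}, text
    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


template = """---
name: Docker example
author: Captain Planet
---
The purpose of this example is to demonstrate how to move the execution
of the algorithm being measured to a Docker container.
"""

meta, body = split_front_matter(template)
print(meta)
```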

Measurement script

The original script is useful in this example because all the functionality to be run is already encapsulated in functions. We only need to complement the script by initializing the measurement session before calling the original functions and finalizing it afterwards.

The original script does not have any side effects, so the same function can be run multiple times to produce statistically meaningful data.

Imports

We can use the existing PowerGoblin client library for building the interaction with the PowerGoblin instance. At the beginning of the script, we add imports for the library:

    from powergoblin import PowerGoblin, Collectd
    import os

The imported PowerGoblin class provides the functionality for making API calls. The Collectd object manages the background execution of the collectd process. The os module is imported for reading the PowerGoblin host's name and port, which are provided via the environment variable HOST.

Initialization

We'll add the following initialization code before the calls to the functionality to be tested:

    runs = 4
    host = os.environ['HOST']
    pg = PowerGoblin(host)
    pg.get("cmd/startSession")
    pg.post_file("session/latest/import/template", "README.md")
    cd = Collectd()

The hostname and port are read from the HOST environment variable, which the Docker runtime will later pass to the container.
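Reading `os.environ['HOST']` directly raises a bare `KeyError` when the variable is missing. A slightly more defensive variant could look like the sketch below. The helper `read_host` is hypothetical, the default port 8080 is an assumption taken from the `localhost:8080` example used later in this document, and IPv6 literals are not handled.

```python
import os


def read_host(env=os.environ, default_port=8080):
    """Return (hostname, port) parsed from the HOST variable.

    Raises RuntimeError with a readable message when HOST is missing
    or malformed. The default port is an assumption for illustration.
    """
    value = env.get("HOST", "").strip()
    if not value:
        raise RuntimeError("HOST is not set; expected e.g. HOST=localhost:8080")
    hostname, separator, port = value.rpartition(":")
    if not separator:
        # No port given, fall back to the assumed default.
        return value, default_port
    if not hostname or not port.isdigit():
        raise RuntimeError(f"HOST has an unexpected format: {value!r}")
    return hostname, int(port)


print(read_host({"HOST": "localhost:8080"}))
```

The helper takes the environment as a parameter so that it can be exercised with a plain dictionary instead of the real process environment.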

We start a new measurement session, submit the template describing the measurement, and initiate a background collectd process.

Runs

The following code starts the actual measurement and gives it the descriptive name RandomNumbers. The function do_computation is executed 4 times. We command PowerGoblin to start and stop a run around each such call. Finally, we stop the measurement.

    pg.get("session/latest/measurement/start/RandomNumbers")
    for run in range(runs):
        pg.get("session/latest/run/start")
        do_computation()
        pg.get("session/latest/run/stop")
    pg.get("session/latest/measurement/stop")
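If the measured function can raise an exception, the run would be left open. One way to guard against this is to wrap the start and stop calls in a context manager. The sketch below uses a hypothetical `RecordingClient` that only records the requested paths, so it runs without a PowerGoblin instance; the only method assumed on the client is `get(path)`, as used in the code above.

```python
from contextlib import contextmanager


class RecordingClient:
    """Hypothetical stand-in for the PowerGoblin client; it only records paths."""

    def __init__(self):
        self.calls = []

    def get(self, path):
        self.calls.append(path)


@contextmanager
def measured_run(client):
    """Start a run and stop it again, even if the measured code raises."""
    client.get("session/latest/run/start")
    try:
        yield
    finally:
        client.get("session/latest/run/stop")


client = RecordingClient()
client.get("session/latest/measurement/start/RandomNumbers")
for _ in range(4):
    with measured_run(client):
        sum(range(1000))  # placeholder for do_computation()
client.get("session/latest/measurement/stop")

print(len(client.calls), "API calls recorded")
```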

Finalization

At the end, we close the measurement. We first close collectd, then synchronize the clocks between the systems, then create an archive of the logs created by collectd, and send them to the PowerGoblin instance. Finally, we close the session, which will store it on disk as well.

    cd.close()
    pg.sync("session/latest/sync")
    archive = cd.create_archive()
    pg.post_file("session/latest/import/collectd", archive)
    pg.get("session/latest/close")
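To illustrate what creating an archive of the logs involves, the following sketch packs a directory of log files into a gzip-compressed tar archive using only the standard library. It is an assumption about one possible approach, not the implementation of `create_archive` in the client library; the helper `archive_directory`, the directory layout, and the file names are all hypothetical.

```python
import tarfile
import tempfile
from pathlib import Path


def archive_directory(log_dir, archive_path):
    """Pack every file under log_dir into a gzip-compressed tar archive."""
    log_dir = Path(log_dir)
    with tarfile.open(str(archive_path), "w:gz") as tar:
        for path in sorted(log_dir.rglob("*")):
            if path.is_file():
                # Store paths relative to log_dir so the archive is portable.
                tar.add(str(path), arcname=path.relative_to(log_dir).as_posix())
    return Path(archive_path)


with tempfile.TemporaryDirectory() as tmp:
    logs = Path(tmp) / "logs"
    (logs / "cpu").mkdir(parents=True)
    (logs / "cpu" / "usage.csv").write_text("epoch,value\n0,1.0\n")
    archive = archive_directory(logs, Path(tmp) / "collectd.tar.gz")
    with tarfile.open(str(archive)) as tar:
        names = tar.getnames()

print(names)
```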

Docker

Dockerfile

Docker creates an isolated sandbox to run the program. However, each image must be individually configured to include all the tools, library dependencies, and other settings the program needs to run.

Here we use the Alpine Linux distribution as a base, on which we install collectd (for logging), python3, and py3-requests (for making the HTTP requests).

The configuration also copies the simulation.py, powergoblin.py, and README.md files described above into the Docker image. Finally, the CMD instruction describes how to start the program (python3 simulation.py).

    FROM alpine:latest

    RUN apk update && \
        apk add --no-cache python3 py3-requests collectd && \
        rm /var/cache/apk/*

    ADD simulation.py .
    ADD powergoblin.py .
    ADD README.md .

    CMD [ "/usr/bin/python3", "simulation.py" ]

Alpine is an often recommended distribution for Docker images because the generated images are rather small. Sometimes applications are not compatible with the musl C library used by Alpine. One of Debian's "slim" base images (e.g. debian:stable-slim) might be a better starting point in those cases.

Building the Docker image

On the SUT, before the container can be started, you will either need to build a Docker image locally or pull the image from an external source (e.g. Docker Hub, GitLab). Note that running Docker requires Docker to be installed and the Docker service (along with its dependencies) to be running:

    $ sudo systemctl start docker

A Docker image can be built locally as follows:

    $ docker build . -t sut-simu

The image is now shown in Docker's local image list:

    $ docker images
    REPOSITORY   TAG       IMAGE ID       CREATED         SIZE
    sut-simu     latest    7472c708c6ea   3 seconds ago   51.9MB

CI/CD script

Docker image building can also be delegated to the GitLab server as part of a CI/CD run:

    image: alpine:latest

    stages:
      - publish

    publish:
      stage: publish
      image: docker:latest
      services:
        - docker:dind
      rules:
        - if: $CI_COMMIT_TAG
      script:
        - docker login -u $CI_REGISTRY_USER -p $CI_REGISTRY_PASSWORD $CI_REGISTRY
        - docker build -t $CI_REGISTRY_IMAGE:latest . -f Dockerfile
        - docker push $CI_REGISTRY_IMAGE:latest

These images are stored in GitLab's container registry. Due to the rule if: $CI_COMMIT_TAG, the Docker image is only built for tagged commits.

Performing the measurement

PowerGoblin

Before executing the measurement script, make sure PowerGoblin has been started:

    $ powergoblin/bin/power

Since the process stays active, you may want to start a new terminal at this point.
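An automated setup may want to verify that PowerGoblin is reachable before the measurement starts. The sketch below polls a TCP port until a connection succeeds or a timeout expires. The helper `wait_for_port` is hypothetical and only checks that something is listening on the port; it does not verify that the listener is actually PowerGoblin.

```python
import socket
import time


def wait_for_port(hostname, port, timeout_s=10.0, interval_s=0.5):
    """Return True once a TCP connection succeeds, False after timeout_s."""
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with socket.create_connection((hostname, port), timeout=interval_s):
                return True
        except OSError:
            # Connection refused or timed out: retry until the deadline.
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval_s)
```

Assuming the address used later in this example, a call such as `wait_for_port("localhost", 8080)` could be placed at the start of the measurement script, aborting with a clear message if it returns False.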

Preparing the Docker image

To fetch the remotely built Docker image, you can pull it from GitLab's registry:

    $ docker login registry.gitlab.utu.fi
    $ docker pull registry.gitlab.utu.fi/...

A locally built image should be available automatically after building.

Starting a container

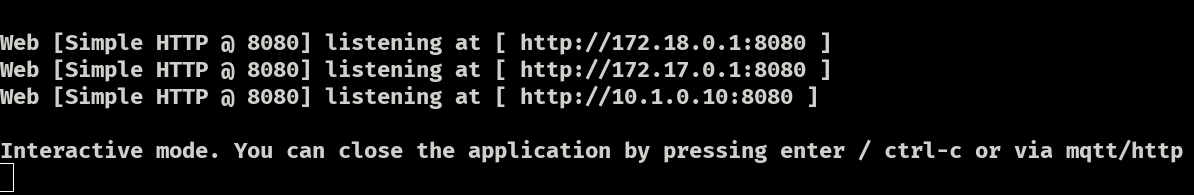

Here we assume that the PowerGoblin instance is running on the same computer (localhost:8080). In practice, the PowerGoblin instance should be located on another system because it will have an effect on the results. When starting PowerGoblin, it will list all the addresses it will be listening to:

We pass this information via a Docker variable which is analogous to passing this information via environment variable HOST:

$ export HOST=localhost:8080

$ python3 simulation.py

Launching a Docker container is straightforward:

    $ docker run -e HOST=localhost:8080 --network host sut-simu:latest

As a result, the Docker container automatically initiates a measurement session, executes the application 4 times, and closes the session so that it is stored on disk.
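When the session is started from a script rather than a terminal, the same command can be assembled as an argument list. This is a minimal sketch; the helper `docker_run_command` is hypothetical, and it only builds and prints the command, with the flags taken from the command line above.

```python
import shlex


def docker_run_command(image, host, network="host"):
    """Build the argument list for `docker run` as used in this example."""
    return ["docker", "run", "-e", f"HOST={host}", "--network", network, image]


cmd = docker_run_command("sut-simu:latest", "localhost:8080")
print(shlex.join(cmd))

# To actually start the container (requires Docker and a built image):
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Passing the arguments as a list avoids shell quoting issues if the host or image name ever contains unusual characters.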

External links

- Project repository in UTU GitLab: tech/soft/tools/power/powergoblin/stuff/docker-example

- Distribution package: utils.tar.gz